Introduction

An Industrial Ethernet network consists of multiple types of hosts (such as PCs, servers, and SCADA/DCS controllers) interconnected by Ethernet switches so that they can communicate with each other. By default, all devices in the network have an equal opportunity to transmit their data to their destinations.

This shared, best-effort nature makes Ethernet non-deterministic and therefore poorly suited to real-time use. In other words, high-priority or mission-critical data may be delivered with unpredictable latency or may even fail to be delivered. An intelligent switched Ethernet network, with proper design, configuration, and management, can be deterministic enough to run real-time applications. One of the key features an intelligent switch needs in order to offer deterministic performance is QoS priority.

This paper presents the technical details of QoS Priority and explains how it operates on an intelligent Ethernet switch.

Quality of Service (QoS)

QoS - Priority

QoS has Layer 2 and Layer 3 components; the Layer 2 component is defined by the IEEE 802.1p standard. In this paper, we will only discuss the Layer 2 component and the two QoS features of the TC JumboSwitch: Port-Based Priority and Queue Scheduling. These two features, together with Virtual LAN (VLAN), provide a reliable method of assuring quality of service for data packets within an Ethernet network.

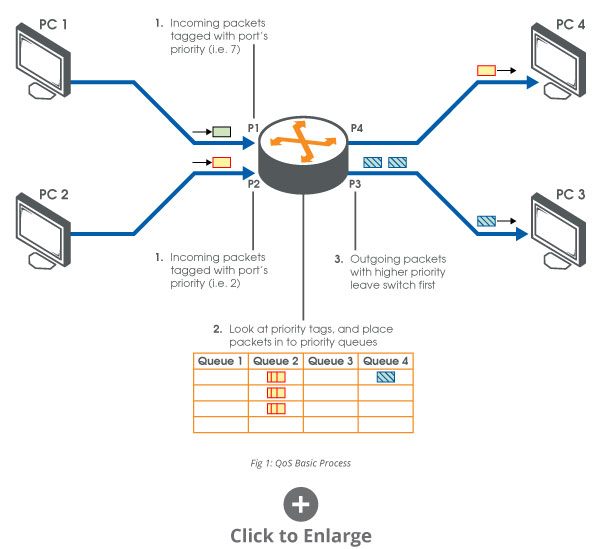

Priority Process

The priority process is integrated with the VLAN process, as specified in the standard. QoS priority factors into the Ingress and Internal Processing steps; the Egress step is determined solely by VLAN. Below are brief descriptions of what priority adds to each step of the process.

- Ingress: Data packets received by a VLAN- and Priority-supported switch are "tagged" with a 2-byte tag containing a 3-bit priority number, which ranges from 0 to 7. This is the same tag that the VLAN process adds to an incoming packet, which is why Priority and VLAN are connected.

- Internal Processing: The switch looks at the priority in the packet's tag and decides which one of its multiple queues to place the packet in. The queues are then emptied in a predetermined order for forwarding.

- Egress: Packets with a higher priority are therefore forwarded out of the switch before those with lower priorities.

Figure 1 shows these three steps visually. The steps listed above are only the basic process of how QoS works. The two features mentioned previously are configurations that users can set to specify more exactly how the switch processes the packets it receives. These configurations are described in the next section.

Configuring a VLAN and Priority-Supported Switch

As described above, the basic process can be divided into three steps: Ingress, Internal Processing, and Egress. For QoS Priority, the Ingress and Internal Processing steps can be configured to perform more specific actions. This section describes these configurations, their use, and their effect on the switch.

Ingress - Port Priority

There is one configuration that affects the ingress step in QoS: Port Priority. Each port has a Default Priority assigned to it, which users can set to a value from 0 to 7.

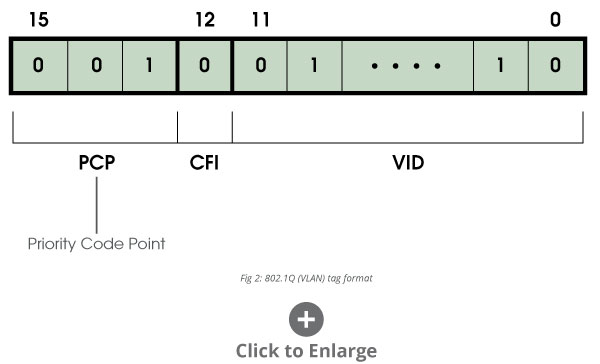

When a packet comes into a switch, the switch checks it for an existing VLAN tag, which contains a priority code. A VLAN tag is a 2-byte tag with the following format: bits 15 to 13 contain the priority code, bit 12 is a CFI bit set to 0 for Ethernet switches, and bits 11 to 0 contain the VLAN ID. (In an IEEE 802.1Q frame, these two bytes follow a 2-byte tag protocol identifier, 0x8100.) This format is illustrated in Figure 2.
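The bit layout described above can be sketched in a few lines of Python. This is only an illustration of the field positions; the function names `pack_tag` and `unpack_tag` are hypothetical and do not come from any switch firmware or library.

```python
from typing import Tuple


def pack_tag(priority: int, vlan_id: int, cfi: int = 0) -> int:
    """Build the 16-bit tag value from its three fields."""
    if not 0 <= priority <= 7:
        raise ValueError("priority must fit in 3 bits (0 to 7)")
    if cfi not in (0, 1):
        raise ValueError("CFI is a single bit")
    if not 0 <= vlan_id <= 0xFFF:
        raise ValueError("VLAN ID must fit in 12 bits")
    # bits 15..13 = priority, bit 12 = CFI, bits 11..0 = VLAN ID
    return (priority << 13) | (cfi << 12) | vlan_id


def unpack_tag(tag: int) -> Tuple[int, int, int]:
    """Split a 16-bit tag value into (priority, cfi, vlan_id)."""
    priority = (tag >> 13) & 0x7
    cfi = (tag >> 12) & 0x1
    vlan_id = tag & 0xFFF
    return priority, cfi, vlan_id
```

For example, priority 6 on VLAN 100 packs to `0xC064`, and unpacking that value returns the same three fields.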

If the packet is untagged, the switch adds a tag whose priority is the receiving port's Default Priority. Otherwise, the packet is left unmodified (see Table 1).

Table 1: Ingress Tagging Action

| VLAN Tag Check | Action |
| --- | --- |
| Packet is tagged | Do not modify packet |
| Packet is untagged | Tag packet with Default Priority for receiving port |
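The ingress rule can be expressed as a small function. The sketch below is a simplified model, not the switch's actual implementation: `ingress_priority` is a hypothetical name, and an untagged packet is represented by `None`.

```python
from typing import Optional


def ingress_priority(tag_priority: Optional[int], port_default_priority: int) -> int:
    """Return the priority a packet carries after the ingress step.

    tag_priority is None for an untagged packet; otherwise it is the
    0 to 7 priority already present in the packet's VLAN tag.
    """
    if tag_priority is None:
        # Untagged: the switch tags the packet with the port's Default Priority.
        return port_default_priority
    # Already tagged: the packet is not modified.
    return tag_priority
```

Note that the check is against `None` rather than truthiness, so a packet that arrives tagged with priority 0 keeps priority 0 instead of picking up the port default.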

Internal Processing

The internal process of QoS is essentially how the switch determines which packets should be forwarded first when it carries out its forwarding decisions. For example, packets tagged with priority 6 will be forwarded before packets tagged with priority 2.

The internal processing step is performed by using a packet's priority to decide which priority queue to place the packet in. A switch that supports QoS can have any number of priority queues; the number depends on the hardware. For example, the TC JumboSwitch has four priority queues.

The 802.1p standard states which priorities belong to which queues. The higher the queue number, the higher the priority of that queue in the switch.

For switches with four queues:

- Queue #4: for priorities 6 and 7

- Queue #3: for priorities 4 and 5

- Queue #2: for priorities 0 and 3

- Queue #1: for priorities 1 and 2
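The four-queue mapping listed above amounts to a simple lookup table. The names `PRIORITY_TO_QUEUE` and `queue_for_priority` in this sketch are illustrative only.

```python
# Priority (0 to 7) -> queue number (1 to 4), as listed for four-queue switches.
PRIORITY_TO_QUEUE = {
    1: 1, 2: 1,   # Queue #1 (lowest)
    0: 2, 3: 2,   # Queue #2
    4: 3, 5: 3,   # Queue #3
    6: 4, 7: 4,   # Queue #4 (highest)
}


def queue_for_priority(priority: int) -> int:
    """Return the queue number that a packet with this priority is placed in."""
    if priority not in PRIORITY_TO_QUEUE:
        raise ValueError("priority must be 0 to 7")
    return PRIORITY_TO_QUEUE[priority]
```

Note that priority 0 lands in Queue #2, above priorities 1 and 2, so the mapping is not a plain division of the priority number by two.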

Users can configure the rule by which these priority queues are emptied. In other words, the order in which packets are forwarded to their outgoing ports can be set by the user. This configuration is called Queue Scheduling.

Queue Scheduling

The Queue Scheduling schemes supported on TC's JumboSwitch are Strict Scheduling and Weighted Round Robin (WRR) Scheduling.

- Strict Scheduling: All higher priority queues are emptied before lower priority queues.

- Weighted Round Robin Scheduling: All queues have an opportunity to empty each round, but during each round higher priority queues empty more packets than lower priority queues. For TC products that support this type of scheduling, Table 2 shows the rate at which each queue is emptied.

Table 2: Weighted Round Robin Scheduling

| Queue | Weight |
| --- | --- |
| 4 | 8 Frames per Round |
| 3 | 4 Frames per Round |
| 2 | 2 Frames per Round |
| 1 | 1 Frame per Round |
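The difference between the two schemes can be seen in a small simulation. The sketch below is a simplified model rather than the JumboSwitch's implementation: it assumes no new frames arrive while the queues drain, that each WRR round visits the queues from highest to lowest, and that the largest weight belongs to the highest priority queue (8, 4, 2, 1 frames per round from Queue 4 down to Queue 1), in line with the statement that higher priority queues empty more packets per round. All names are hypothetical.

```python
from collections import deque

# Assumed weights: frames each queue may send per round, keyed by queue number.
WRR_WEIGHTS = {4: 8, 3: 4, 2: 2, 1: 1}


def strict_order(queues):
    """Strict Scheduling: drain each higher queue completely before any lower one."""
    out = []
    for q in sorted(queues, reverse=True):
        out.extend(queues[q])
    return out


def wrr_order(queues, weights=WRR_WEIGHTS):
    """Weighted Round Robin: each round, every queue sends up to its weight in frames."""
    pending = {q: deque(frames) for q, frames in queues.items()}
    out = []
    while any(pending.values()):
        for q in sorted(pending, reverse=True):
            for _ in range(weights[q]):
                if not pending[q]:
                    break
                out.append(pending[q].popleft())
    return out
```

With ten frames waiting in Queue 4 and two in Queue 1, `strict_order` sends all ten high-priority frames first, whereas `wrr_order` sends eight of them, then one Queue 1 frame, then the remaining two, then the last Queue 1 frame. Under WRR, low-priority traffic is delayed but not starved.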

Egress

The QoS process only affects packets during the Ingress and Internal Processing steps. As mentioned before, the Egress step depends solely on the VLAN settings. Thus, if a port is configured to remove the VLAN tag, a packet leaving the switch through that port is also stripped of its priority, which means it may not be forwarded at the same priority within the next switch it reaches. If a port is configured to leave the tag in place, the packet will be forwarded at the same priority within the next switch it reaches.

Real-Time Performance

For real-time Ethernet applications, the following worst-case scenarios may need to be considered:

If Strict Scheduling is selected as the queue scheduling scheme, a high priority packet may reach the output port just after transmission of another Ethernet packet of maximum length (1518 bytes) has started. The high priority packet then has to wait until the preceding packet is fully transmitted. In this instance, the typical worst-case delay caused by the maximum-length packet is 122 µs in a 100 Mbps switch and 12.2 µs in a 1 Gbps switch.

In the worst-case scenario with Weighted Round Robin (WRR), as many as 7 frames may need to be transmitted before the high priority packet (the 4 + 2 + 1 frames that the three smaller weights in Table 2 allow per round). In this instance, the typical worst-case delay is 854 µs (122 µs × 7) in a 100 Mbps switch and 85.4 µs in a 1 Gbps switch.
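These figures can be checked with a short calculation. The sketch below assumes the 122 µs value includes the 8-byte preamble and start-of-frame delimiter sent ahead of each 1518-byte frame. That assumption is not stated in the text: without those 8 bytes the result would be about 121.4 µs. The function names are illustrative.

```python
PREAMBLE_BYTES = 8  # assumption: preamble + start-of-frame delimiter counted in the delay


def frame_time_us(frame_bytes: int, rate_bps: float,
                  overhead_bytes: int = PREAMBLE_BYTES) -> float:
    """Time in microseconds to put one frame (plus overhead) on the wire."""
    return (frame_bytes + overhead_bytes) * 8 / rate_bps * 1e6


def worst_case_delay_us(rate_bps: float, frames_ahead: int = 1,
                        frame_bytes: int = 1518) -> float:
    """Delay caused by frames_ahead maximum-length frames sent before ours."""
    return frames_ahead * frame_time_us(frame_bytes, rate_bps)


strict_100m = worst_case_delay_us(100e6)               # about 122 µs
wrr_100m = worst_case_delay_us(100e6, frames_ahead=7)  # about 854 µs
strict_1g = worst_case_delay_us(1e9)                   # about 12.2 µs
wrr_1g = worst_case_delay_us(1e9, frames_ahead=7)      # about 85.4 µs
```

The WRR result computed this way is slightly above 854 µs because the text multiplies the already rounded 122 µs by 7.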

Priority and Queue scheduling configuration with JumboSwitch

The JumboSwitch is an industrial-grade Multi-Service Access Platform (MSAP) Ethernet switch that can be configured as a fiber self-healing ring or star network. Interfaces include Ethernet, T1/E1, T3/E3, RS232/422/485, and VoIP (FXS/FXO). To assist switch configuration and network management with prioritization requirements, the JumboSwitch provides port-based priority and queue scheduling functions that can be accessed through its WebUI. The following two screens show the Priority and Queue Scheduling settings, respectively.

Summary

An Ethernet network innately provides a reasonable chance for all packets transmitted within the network to reach their destination. However, this does not provide assurance that packets will reach their destinations within the time they are expected to. The Layer 2 solution for this issue is Quality of Service, which provides priorities for these packets. This will ensure that more critical traffic will have precedence in a given network compared to less important traffic.

The increasing demand for industrial applications on Ethernet means that more mission-critical data will be running on shared networks. As a result, the limited bandwidth has to be utilized and managed so that high priority data is delivered on time. Ethernet switches with QoS features can be used to prioritize data traffic and help ensure on-time delivery. Together with other switch functions, and with proper network planning, implementation, and management, a well-constructed intelligent switched Ethernet network is capable of being truly deterministic.
